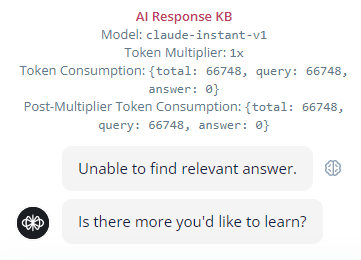

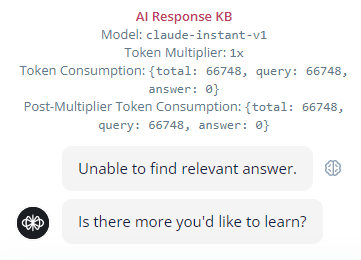

unable to find relevant answer

I have a good knowledge base of docs, but when I ask the bot questions, it keeps saying "unable to find relevant answer", and each attempt consumes over 65,000 tokens without producing anything useful. What is going on? Please help.

# Let your agents search the web 🔎

Hey everyone! We just released the web search tool for the agent step! Your agents can now automatically search the web for information, letting your agent supplement the LLM's knowledge and the data in its knowledge base with live, up-to-date information. Plus...

* You can restrict searches to specific domains, so your agent only searches sites that you own
* This is a tool, so you remain in control of when the agent searches the web
* Results are automatically summarized in a way that your agent can automatically understand

Under the hood, we're using OpenAI's web search API. Give it a try, and let us know what you think!

https://docs.voiceflow.com/changelog/native-web-search-tool

jacklyn · 4mo ago
