LLM Intent "listening" on every response

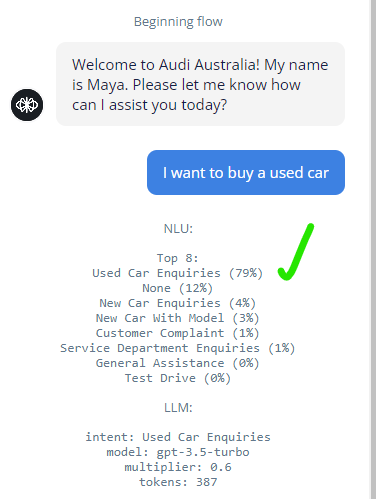

I'm testing out the new LLM intents, and they seem to be working great from an "intent trigger" standpoint.

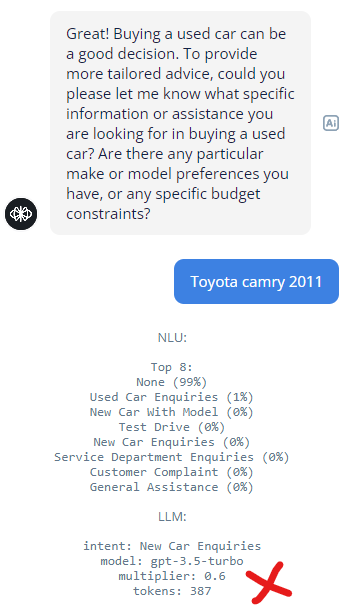

But as I continued through the conversation, I noticed that every single step of the assistant picks up the NLU + LLM combination.

Text block, Response AI block, capture, doesn't matter. Every single step of the conversation is charging me with a token hit of 384.

Is this working as intended, or am I missing something?

Roughly 384 to 387 tokens multiplied by every route in the conversation is kind of insane, especially when I only need to access the intents in a choice step.
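
To put a rough number on it, here is a minimal sketch of the arithmetic. The 384-token figure is what I'm seeing per step; the step counts (10 steps total, 1 choice step that actually needs intents) are made-up values for illustration, and the function name is hypothetical, not part of any platform API.

```python
def classification_overhead(tokens_per_step: int,
                            total_steps: int,
                            steps_needing_intents: int) -> dict:
    """Compare tokens charged when every step runs intent classification
    against tokens needed if only some steps ran it."""
    charged = tokens_per_step * total_steps            # classification on every step
    needed = tokens_per_step * steps_needing_intents   # classification only where used
    return {"charged": charged, "needed": needed, "wasted": charged - needed}


# Hypothetical conversation: 10 steps, only 1 choice step needs intents.
estimate = classification_overhead(tokens_per_step=384,
                                   total_steps=10,
                                   steps_needing_intents=1)
print(estimate)
```

Under those assumed numbers, most of the tokens charged go to steps that never use the intent result.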
