ltd-scarlet

What’s causing LLM Response Timeout?

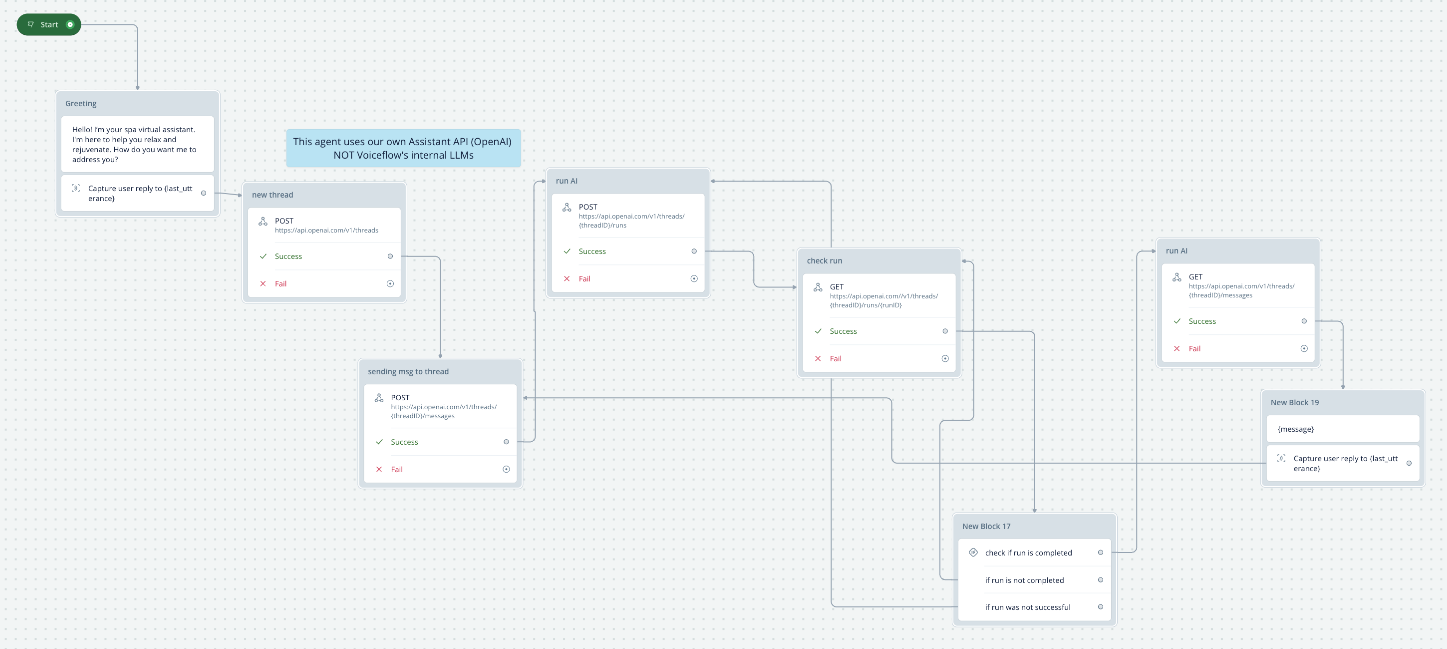

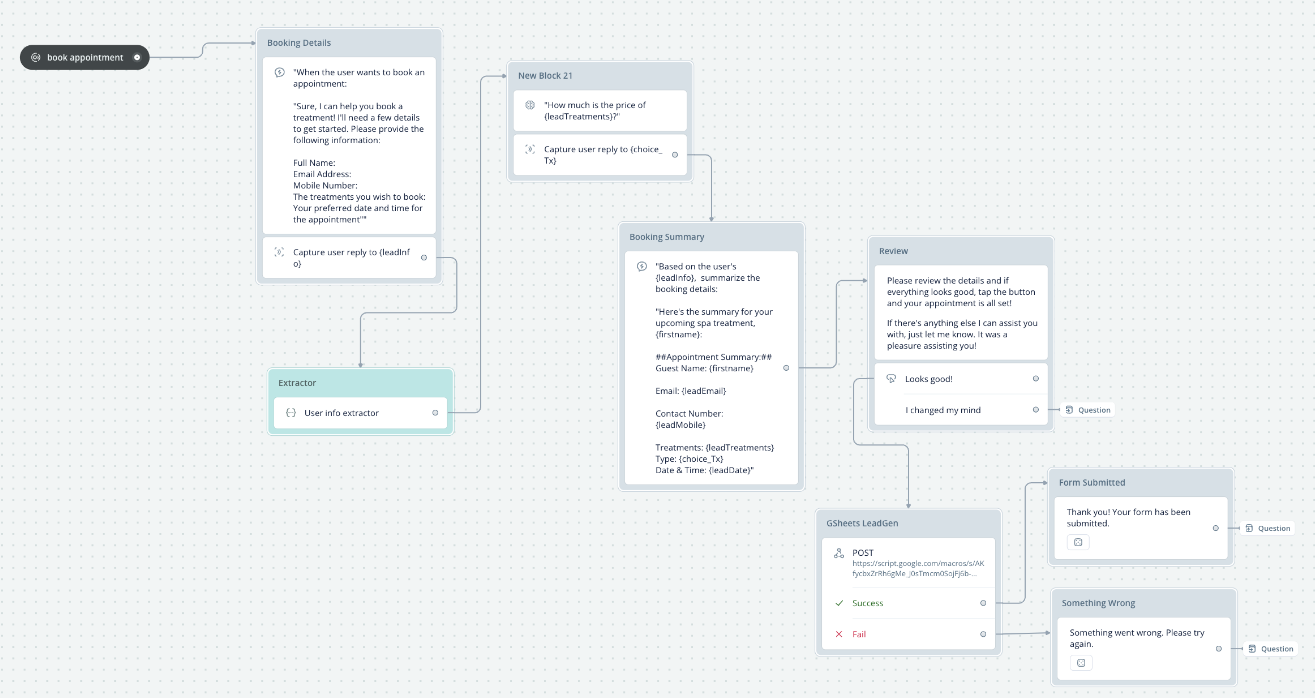

Hello. I’m a newbie here, so forgive me if my question is basic. I’m using my own Assistants API in the first part of my workflow (screenshot 1). I also made an intent for “book Appointment” (screenshot 2), but for intent classification I’m using VF’s LLMs.

It was working well earlier, but after a few hours I started receiving “LLM Response Timeout” errors and the conversations ended.

1) What could be the cause?

2) Is it because I have already exceeded 2M tokens?

3) Is there a way to rely entirely on my own OpenAI API Assistant, so that I use my own token allowance most of the time?

Thank you for all your help. This is all new to me.
