dual-salmon

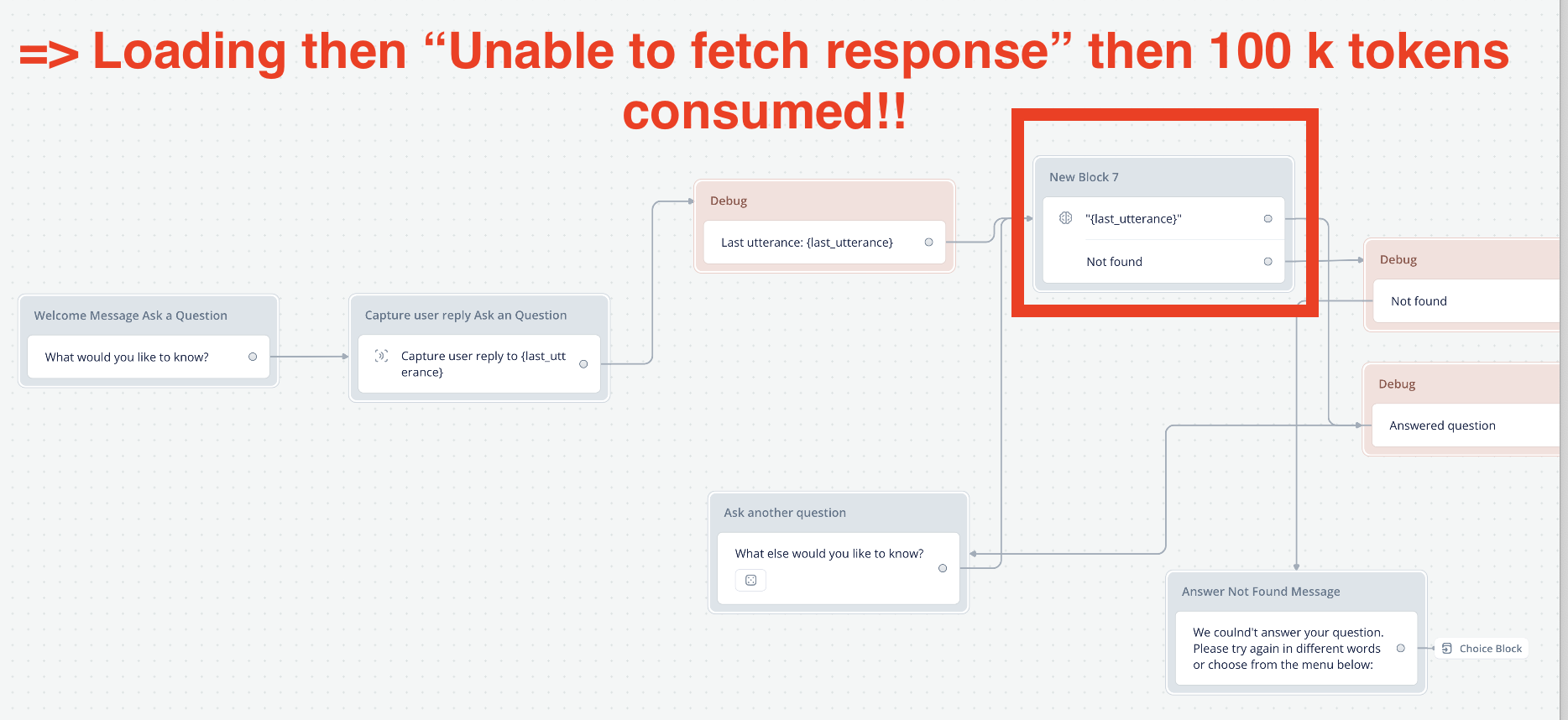

Scary high token consumption

Hello I upgraded 1 hour ago (token consumption was 0 then) and did some tests to my (unpublished) chatbot.

After a few tests it was 69k tokens, then 177k and then suddenly 261k

I only used simple blocks with

- text

- capture user reply

- response AI with knowledge base

My model is GPT3.5

It kept loading for a while then said "Unable to fetch response.

Now I tried it again with and had the same problem.

It jumped from 262k to 362k tokens just from the attached workflow.

This loop was working before, I am confused and also hate the fact that I consumed 100k in one minute of loading.

After a few tests it was 69k tokens, then 177k and then suddenly 261k

I only used simple blocks with

- text

- capture user reply

- response AI with knowledge base

My model is GPT3.5

It kept loading for a while then said "Unable to fetch response.

Now I tried it again with and had the same problem.

It jumped from 262k to 362k tokens just from the attached workflow.

This loop was working before, I am confused and also hate the fact that I consumed 100k in one minute of loading.