AI responses just randomly stop mid-sentence.

I'm looking to achieve a continuous conversation thread with the chatbot.

The issue is that the chatbot repeatedly stops mid-sentence throughout the conversation.

I have tried adjusting the agent by editing the script details, to no avail.

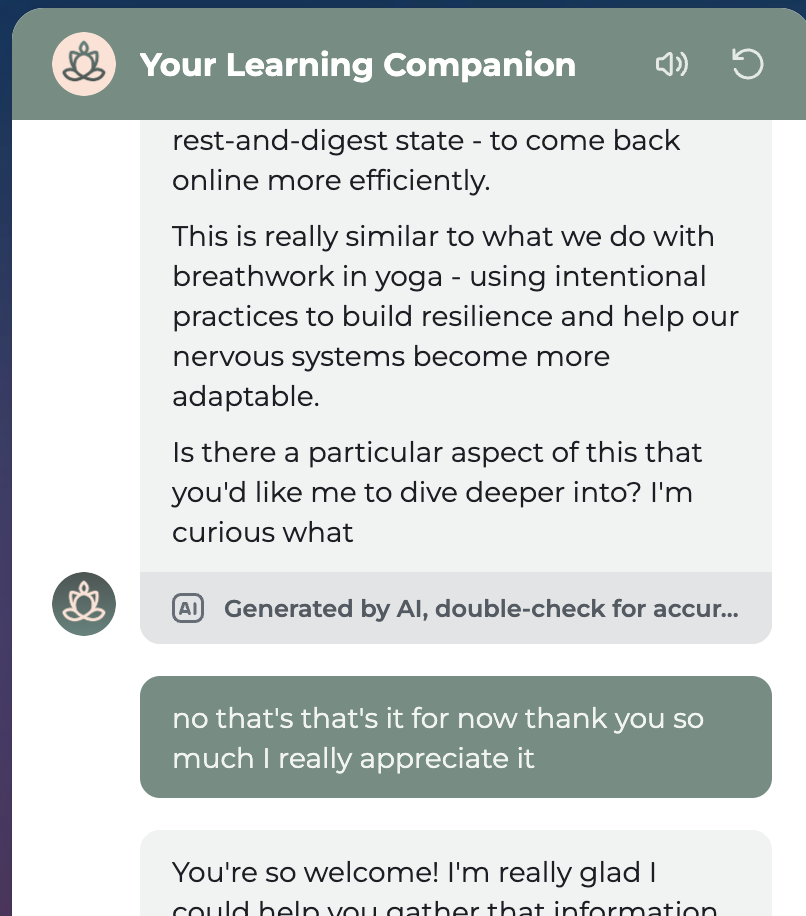

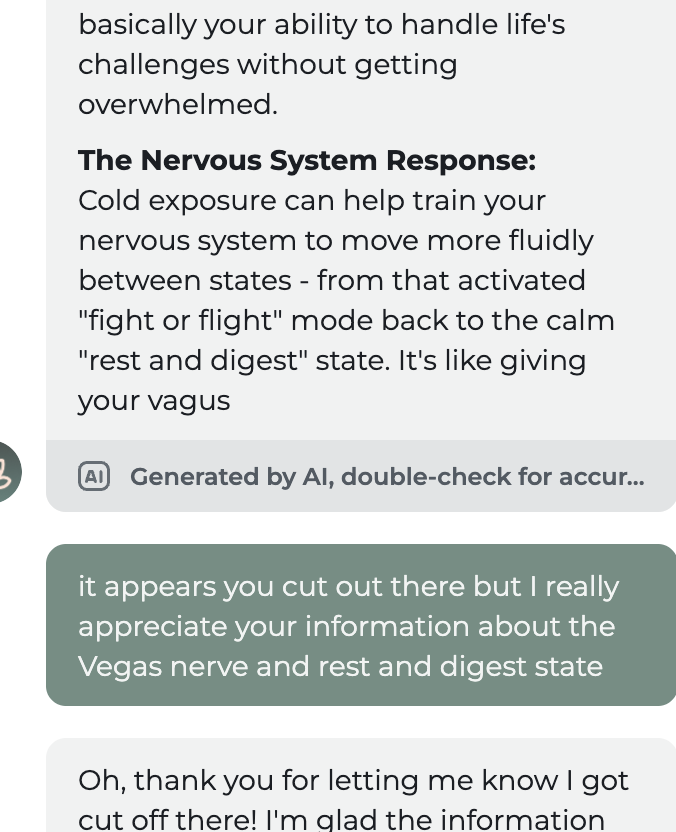

Here's an example of text from the AI agent: "Is there a particular aspect of this that you'd like me to dive deeper into? I'm curious what." That's it; it doesn't finish the sentence.

Any help is appreciated!